The paradox is clean and uncomfortable. A brand spends years climbing to the top of Google search, achieves the coveted number one ranking for its most important keyword, and then watches as ChatGPT answers a question in that same category without mentioning the brand once. The ranking is real. The traffic is real. The AI invisibility is also real. And according to a large-scale study from NP Digital, this situation is far more common than most marketers currently understand.

Neil Patel, co-founder of NP Digital, put it directly in a recent breakdown of the firm's findings: "Right now, some brands are showing up constantly in ChatGPT answers. Others are completely invisible. And the brands that are invisible, a lot of them rank number one on Google." That is not an outlier observation. It is the central finding of a study that analyzed 500 commercial keywords and ran 4,338 related prompts through large language models to measure where citations actually come from.

The results represent a genuine rupture between two systems that SEO practitioners had long assumed were roughly correlated. The correlation turns out to be far weaker than assumed. Google and AI search engines are looking at the web through fundamentally different lenses, ranking sources by different signals, and pulling citations from largely different pools. Understanding that divergence is no longer optional for brands with any serious interest in being found.

The Citation Gap in Numbers

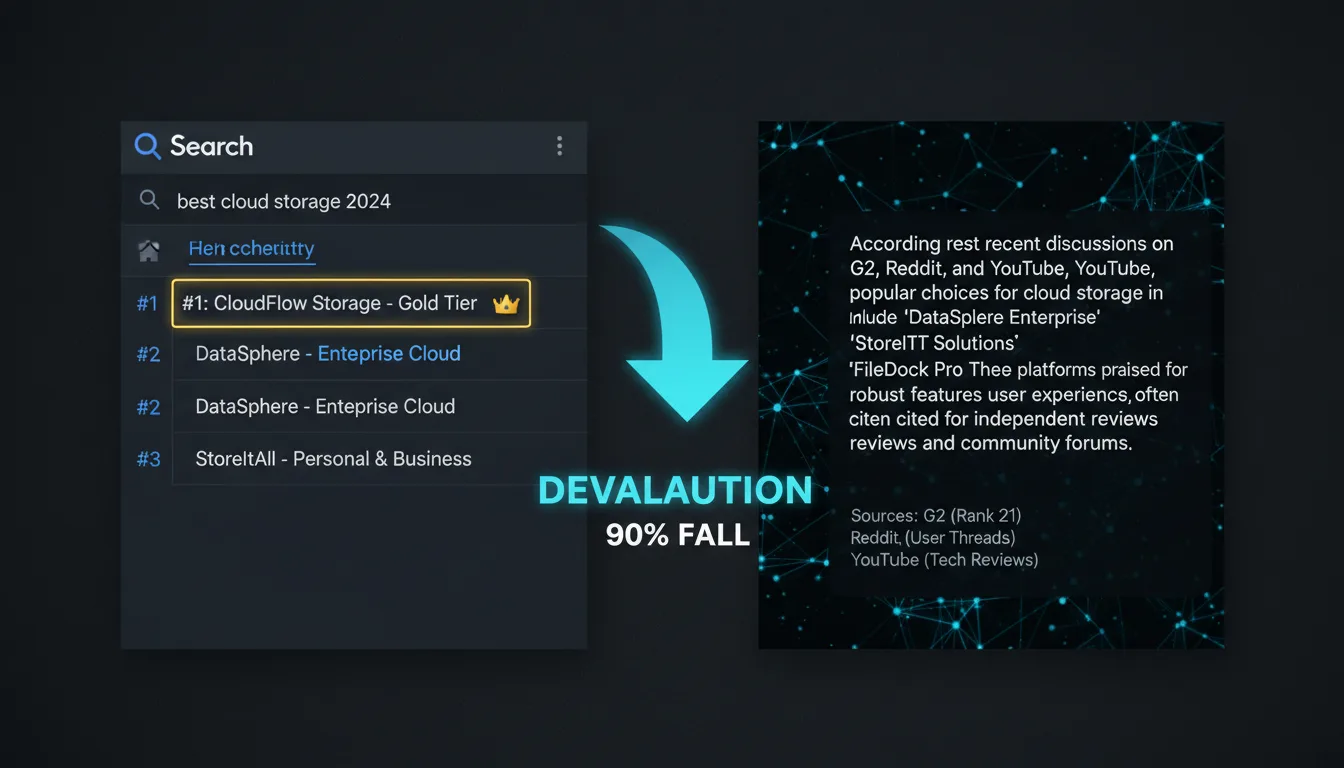

The NP Digital study quantified the divergence with precision. Ranking number one on Google produced a 31.4% AI mention rate across the prompts tested. That figure sounds reasonable until you look at what happens next. Rank two drops meaningfully. By rank four, the citation rate has collapsed to 2.6%, roughly one-twelfth of the rank-one rate. This is not a gentle gradient. It is a cliff, and it tells you that AI models are not using Google's ranked list as a proxy for authority.

The more striking statistic concerns sources that Google barely acknowledges at all. Ninety percent of the pages ChatGPT cites rank 21 or lower on Google, which means the vast majority of AI-cited content sits below the first two pages of traditional search results. The overlap between what Google ranks highly and what AI models cite was never complete, and it is shrinking. Earlier data showed Google's top 10 accounted for 76% of ChatGPT citations. The current figure is 38%, and it is still falling. Meanwhile, 75% of all AI citations now come from sources that do not appear in Google's top results at all.
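A brand can run the same kind of comparison on its own keyword set by bucketing the URLs an AI answer cites according to their Google rank. The sketch below is only an illustration of that arithmetic, not NP Digital's methodology: the function name `citation_rank_breakdown`, the bucket boundaries, and the sample URLs are all assumptions.

```python
from collections import Counter


def citation_rank_breakdown(cited_urls, google_ranks):
    """Return the share of AI-cited URLs that fall in each Google rank bucket.

    cited_urls: list of URLs cited in AI answers (hypothetical input).
    google_ranks: dict mapping URL -> 1-based Google rank; a missing URL
                  is treated as unranked.
    """
    buckets = Counter()
    for url in cited_urls:
        rank = google_ranks.get(url)
        if rank is None:
            buckets["unranked"] += 1
        elif rank <= 10:
            buckets["top_10"] += 1
        elif rank <= 20:
            buckets["11_to_20"] += 1
        else:
            buckets["21_plus"] += 1
    total = len(cited_urls)
    if total == 0:
        return {}
    return {name: count / total for name, count in buckets.items()}


# Hypothetical sample: four cited URLs, three of which have a known rank.
cited = ["a.example/x", "b.example/y", "c.example/z", "d.example/w"]
ranks = {"a.example/x": 3, "b.example/y": 27, "c.example/z": 45}
print(citation_rank_breakdown(cited, ranks))
```

Tracking these shares over time would show whether the top-10 share for a given category is shrinking in the way the study describes.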

The Platform Scale Problem

The urgency of this shift becomes sharper when you look at the traffic volumes involved. ChatGPT now has 1.2 billion users and drives 78% of all large language model referral traffic. Gemini has 750 million users and has grown 5x in visitors since 2024, while ChatGPT grew only 1.64x in the same period. Meta AI has reached 1 billion users. Patel notes that barely any marketers are optimizing for Meta AI at all, which means an enormous audience is being left entirely unaddressed while teams pour resources into Google SEO refinements.

Each of these platforms draws from different source pools. ChatGPT, Gemini, Claude, and Perplexity do not share a unified index. Optimizing for one does not automatically carry over to the others. Brands that treat AI search as a single channel analogous to Google are misunderstanding the architecture of what they are trying to influence.

How AI Models Actually Retrieve Information

The NP Digital study also tracked how retrieval behavior has shifted across model versions, and the results reveal a rapidly evolving system. With GPT 5.3, only 8% of citations went to brand websites directly. With GPT 5.4, the premium thinking tier, that number jumped to 56%. That is a 7x increase in a single update. The mechanism behind that shift is also measurable: GPT 5.4 runs an average of 8.5 subqueries per prompt, while GPT 5.3 ran one. The model is now conducting something closer to multi-step research before composing an answer, and 37% of GPT 5.4's query types are site-specific operators, meaning it goes directly to a domain rather than surfacing results through a general index sweep.

Freshness signals are also being weighted differently than most SEO practitioners expect. Google cites content approximately 130 days old on average. ChatGPT's average is 80 days. Claude's is 62 days. GPT 5.2 pulled 33% of its citations from content published within the last 30 days. GPT 5.3 dropped that figure to 6%. The recency preference is strong but inconsistent across models and versions, which means treating content pages as static, permanent assets is a structural mistake in the AI search era.
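To act on the freshness numbers, a team needs to know which of its pages have aged past the window it cares about. The following is a minimal sketch under stated assumptions: the `stale_pages` helper, the 90-day default threshold, and the sample pages are hypothetical, and the threshold should be tuned to the platform being targeted (the averages above range from 62 to 130 days).

```python
from datetime import date


def stale_pages(pages, today, max_age_days=90):
    """Return (url, age_in_days) for pages older than max_age_days, oldest first.

    pages: dict mapping URL path -> date of last substantive update.
    max_age_days: assumed threshold, not a figure from the study.
    """
    aged = [(url, (today - updated).days) for url, updated in pages.items()]
    stale = [(url, age) for url, age in aged if age > max_age_days]
    return sorted(stale, key=lambda item: item[1], reverse=True)


# Hypothetical site inventory.
pages = {
    "/pricing": date(2025, 1, 10),
    "/guide": date(2025, 5, 1),
    "/faq": date(2025, 6, 20),
}
print(stale_pages(pages, today=date(2025, 7, 1)))
```

Passing `today` explicitly rather than reading the system clock keeps the check reproducible, which matters if the report is compared from one audit to the next.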

"ChatGPT doesn't read the internet like traditional Google search does. It looks for definitive, well-structured identity relationships."

Frevana, AEO guide

AI Citation Platform Comparison

| Platform | Users | LLM Referral Share | Growth Since 2024 | Avg. Content Age Cited |
|---|---|---|---|---|
| ChatGPT | 1.2B | 78% | 1.64x | 80 days |
| Gemini | 750M | ~14% | 5x | ~90 days |
| Meta AI | 1B | Low | N/A | N/A |
| Claude | N/A | ~5% | N/A | 62 days |

Why Entity Association Beats Content Volume

One of the most counterintuitive findings from the NP Digital research involves the relationship between content output and AI visibility. Patel described a client that ranked number one for its main keyword, carried solid SEO metrics, and had strong domain authority. That client had zero AI citations. The reason: every piece of content the brand had ever produced lived on its own website. It had never built presence anywhere else. The AI models could not find corroborating evidence for the brand's authority from third-party sources, so they effectively did not recognize it as an authoritative entity on the topic.

The contrast case is instructive. A midsize software-as-a-service company that Patel cited had done minimal traditional SEO work. It had no elaborate content strategy and nothing unusual in its domain authority profile. But it was showing up consistently in ChatGPT answers. The reason was its presence across G2 reviews, Reddit discussions, YouTube explainer videos, and niche industry publications. AI models pull from all of those. The brand's identity in the model's understanding of the world was distributed, multi-source, and consistent across independent platforms.

This is the entity association principle. Google maintains a knowledge graph containing 54 billion real-world entities. If a brand is not clearly defined in that system, connected to a specific topic with third-party sources confirming the connection, it is effectively invisible to AI search regardless of its Google ranking. The question AI models are answering is not "who ranks highest for this keyword" but "who is most confidently associated with this topic across the entire web."

"The game has two boards now and most brands are only playing on one."

Neil Patel, NP Digital

Five Patterns the NP Digital Study Found

1. Google and AI search use different algorithms and different signals. High Google rank does not translate to AI visibility. The citation cliff between rank one and rank four confirms these are separate selection mechanisms.

2. Clear H2 structure, FAQ sections, and answers positioned near the top of the page all improve AI citation rates. Equally important: audit your robots.txt file for accidental blocks on GPTBot and PerplexityBot, which affect whether AI crawlers can reach your content at all. A scan of 140 million websites found nearly 6% were accidentally blocking AI crawlers.

3. ChatGPT, Gemini, Claude, and Perplexity each pull from different source pools. Optimization for one does not carry over to the others. Brands need a cross-platform presence strategy rather than a single-channel approach.

4. What matters is not how much content a brand has published but how confidently the AI model can connect that brand to a specific topic across the whole web. Wikipedia entries, industry reports, podcast appearances, third-party reviews, and analyst mentions all contribute. On-site content alone is not sufficient.

5. AI models have a measurable preference for recent content, with average citation ages ranging from 62 to 130 days depending on the platform. Content pages should be treated as living documents with quarterly refresh cycles rather than permanent static assets.
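The robots.txt audit mentioned among these patterns is easy to automate. Here is a minimal sketch using Python's standard `urllib.robotparser`; the helper name `blocked_ai_crawlers`, the two-entry crawler list, and the sample file are assumptions for illustration, and a real audit would likely cover more user agents.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens to check; extend this list as needed (assumed subset).
AI_CRAWLERS = ["GPTBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt, url="/", crawlers=AI_CRAWLERS):
    """Return the crawlers that the given robots.txt text bars from `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in crawlers if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_crawlers(sample))
```

The example parses a string so it runs without network access. To check a live site, `RobotFileParser.set_url()` followed by `read()` fetches the file instead.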

The AEO Playbook: A Different Discipline

What the NP Digital findings describe is a new discipline that practitioners are beginning to call Answer Engine Optimization, or AEO. The workflow is meaningfully different from Google SEO. It starts with prompt research rather than keyword research, tracking how users phrase questions to AI assistants rather than what they type into a search box. It requires answer tracking across multiple AI platforms simultaneously. It demands a structured identity audit that evaluates how consistently a brand is referenced across independent sources. And it involves active management of a brand's presence in the places AI models treat as authoritative: Wikipedia, G2, Reddit, Trustpilot, YouTube, industry analyst reports, and editorial media.
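The answer-tracking step can be sketched as a simple mention-rate calculation over collected responses. This is an illustrative outline only: it assumes the answers have already been gathered from each platform by some other means, and the `mention_rates` function, the platform labels, and the "Acme CRM" brand are all hypothetical.

```python
import re
from collections import defaultdict


def mention_rates(answers, brand):
    """Return {platform: share of answers that mention the brand}.

    answers: iterable of (platform, prompt, answer_text) tuples.
    Matching is case-insensitive and on whole words only.
    """
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, _prompt, text in answers:
        totals[platform] += 1
        if pattern.search(text):
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical collected answers for a fictional brand.
answers = [
    ("chatgpt", "best crm for startups", "Popular options include Acme CRM and others."),
    ("chatgpt", "crm with email sync", "Consider tools that sync with Gmail."),
    ("gemini", "best crm for startups", "Acme CRM is often recommended."),
]
print(mention_rates(answers, "Acme CRM"))
```

Reporting the rate per platform rather than as one blended number reflects the point that each assistant draws from a different source pool.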

The mistake Patel flags most consistently is the response many brands have already defaulted to. Sixty-eight percent of marketers responding to the AI search challenge are publishing listicles on their own site and listing themselves as the best option. That approach builds neither real authority nor the kind of distributed entity association that AI models require. It is a Google SEO instinct applied to a problem that requires a different framework entirely.

One additional signal worth watching: OpenAI is now rolling out a self-service ad manager for the US market. Brands that move into that channel early will have first-mover positioning in a placement environment with 1.2 billion users and no established bidding competition. The analogy to early Google AdWords is imperfect but not frivolous.

What the Two-Board Game Requires

The practical takeaway from the NP Digital research is not that Google SEO is worthless. It is that Google SEO is now one of two boards, and most brands are playing only on that one. A number one Google ranking remains valuable for the portion of users who still use traditional search. It also still influences AI citation rates at the margin. But it no longer comes close to guaranteeing visibility in the fastest-growing information channel on the web.

The brands currently winning on both boards share a common trait: they have built distributed identity across independent platforms, structured their on-site content to answer specific questions directly, maintained freshness through regular content updates, and ensured AI crawlers can actually access their pages. None of those steps require abandoning Google SEO. They do require treating it as part of a larger system rather than the system itself.

The data from 4,338 prompts is unambiguous. The number one Google rank still opens a door, but in a world where 90% of AI-cited content sits below rank 21, that door leads to a smaller room than it used to. The question for every brand operating in this environment is whether they are building presence on both boards, or optimizing with increasing sophistication for only one.